Businesses increasingly rely on data to make informed decisions and gain a competitive edge. Data scraping tools help companies efficiently and accurately pull structured information from websites for purposes such as pricing and product monitoring, market research, and competitive intelligence.

This article explores some of the top data scraping tools available today, comparing their core features, strengths, and ideal use cases. We’ll start with a powerful, enterprise-ready crawling API and then review four additional platforms that offer strong alternatives depending on your technical needs and business goals.

Why Data Scraping Tools Matter for Modern Businesses

Web data powers decision-making across industries, from e-commerce and finance to real estate and SaaS. Collecting that data manually, however, is both time-consuming and unreliable.

Modern scraping tools automatically extract data, handle anti-bot protections, manage infrastructure, and structure raw HTML into usable datasets. The right platform saves you time and helps ensure accuracy, scalability, and alignment with your operational goals.
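To make "structuring raw HTML into usable datasets" concrete, here is a minimal sketch using only Python's standard library. The sample markup, the class names (`product`, `name`, `price`), and the `ProductParser` helper are assumptions made for illustration; real pages vary, and commercial tools handle far messier input.

```python
from html.parser import HTMLParser

# Hypothetical sample markup; real pages will differ.
SAMPLE_HTML = """
<ul>
  <li class="product"><span class="name">Widget</span><span class="price">$19.99</span></li>
  <li class="product"><span class="name">Gadget</span><span class="price">$5.00</span></li>
</ul>
"""


class ProductParser(HTMLParser):
    """Collects name/price pairs from <span class="name"> and <span class="price">."""

    def __init__(self):
        super().__init__()
        self.rows = []       # finished records
        self._field = None   # field currently being read, if any
        self._current = {}   # record under construction

    def handle_starttag(self, tag, attrs):
        cls = dict(attrs).get("class")
        if tag == "span" and cls in ("name", "price"):
            self._field = cls

    def handle_data(self, data):
        # Only keep text that sits inside a span we care about.
        if self._field:
            self._current[self._field] = data.strip()

    def handle_endtag(self, tag):
        if tag == "span":
            self._field = None
        elif tag == "li" and self._current:
            # Turn "$19.99" into a float so the record is usable downstream.
            self._current["price"] = float(self._current["price"].lstrip("$"))
            self.rows.append(self._current)
            self._current = {}


def extract_products(html):
    parser = ProductParser()
    parser.feed(html)
    return parser.rows


products = extract_products(SAMPLE_HTML)
```

Here `products` ends up as a list of dictionaries, one per product, which is the kind of structured record a pricing or market research workflow can consume directly.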

- Scrapfly

In an increasingly data-driven world, the need for fast, reliable, and scalable web scraping platforms has never been greater. Scrapfly Crawler API combines speed, power, simplicity, and reliability in a single service. Whether you’re creating a one-off scraper or scaling data pipeline operations, the platform provides the tools to gather data quickly and reliably. For a deeper understanding of how such solutions work in practice, check out the platform’s scraper tool guide.

Key Features & Benefits

- Scalable Crawling Platform: Crawl at any scale, from individual projects to enterprise workloads, without managing your own servers or proxies.

- Smart Anti-Blocking: Automatic proxy rotation, fingerprinting, and anti-CAPTCHA techniques reduce the likelihood of being detected and blocked.

- Rendering Complex Pages: Renders complex web pages, such as single-page applications (SPAs) and AJAX-driven sites, so you can scrape data that other crawlers may not be able to access.

- API-First Design: Standard RESTful API endpoints and extensive documentation make it easy to integrate the Crawler API into your application.

- Adaptive Crawl Behavior: Automatically adjusts the crawl strategy to balance politeness and efficiency.

- Scheduling & Automation: Run your scraping tasks automatically on a recurring schedule.

- Error Handling: Automated error handling through retries, redirect normalization, and response normalization helps minimize failures.
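As a rough illustration of what automated retries involve, the sketch below implements retry with exponential backoff in plain Python. It is not Scrapfly's implementation or API: `TransientError`, `fetch_with_retries`, and the stand-in `flaky_fetch` are hypothetical names, and the delay values are arbitrary assumptions.

```python
import time


class TransientError(Exception):
    """Hypothetical error type for failures worth retrying (timeouts, 5xx)."""


def fetch_with_retries(fetch, url, max_attempts=4, base_delay=0.5, sleep=time.sleep):
    """Call fetch(url), retrying transient failures with exponential backoff."""
    for attempt in range(1, max_attempts + 1):
        try:
            return fetch(url)
        except TransientError:
            if attempt == max_attempts:
                raise  # out of attempts: surface the last error
            # Wait 0.5s, 1s, 2s, ... before the next attempt.
            sleep(base_delay * 2 ** (attempt - 1))


# Usage with a stand-in fetcher that fails twice, then succeeds.
calls = {"count": 0}


def flaky_fetch(url):
    calls["count"] += 1
    if calls["count"] < 3:
        raise TransientError("temporary failure")
    return f"<html>content of {url}</html>"


delays = []  # record the waits instead of actually sleeping
result = fetch_with_retries(flaky_fetch, "https://example.com", sleep=delays.append)
```

Passing `sleep` as a parameter keeps the example fast to run and easy to test. A managed platform takes this kind of logic, along with proxy and rendering concerns, off your hands.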

Scrapfly Crawler API is more than a simple crawler: it is a complete web crawling platform that takes care of the most difficult aspects of extracting data from the web. With built-in anti-blocking, rendering, and scheduling features, it lets your team focus on using the extracted data rather than on the logistics of collecting it. Companies seeking long-term reliability and scalability will find this solution particularly beneficial.

- Octoparse

Octoparse is a user-friendly, no-code data scraping tool for users of all skill levels. Its point-and-click visual interface lets you extract complex data by clicking on page elements rather than writing code.

For anyone who wants strong data extraction capabilities without programming, Octoparse offers a seamless scraping experience.

Key Features & Benefits

- Visual Workflow Builder: Build scraping tasks by dragging and dropping, with no coding required.

- Cloud Extraction: Run your scraping jobs from anywhere and receive the extracted data in a structured format.

- Dynamic Content Support: Handles AJAX and JavaScript-based sites that are typically difficult to scrape.

- Scheduling and Export Options: Automate tasks and export your scraped data in CSV, Excel, or JSON format.

- Ready-Made Templates: Speed up setup with pre-built templates for common scraping tasks.
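For readers curious what exporting to these formats amounts to, the following sketch writes a small set of records to CSV and JSON with Python's standard library. It does not use Octoparse itself; the `records` data and the `to_csv` and `to_json` helpers are hypothetical. Excel output is left out because it typically requires a third-party library.

```python
import csv
import io
import json

# Hypothetical scraped records.
records = [
    {"name": "Widget", "price": 19.99},
    {"name": "Gadget", "price": 5.0},
]


def to_csv(rows):
    """Serialize rows to CSV text with a header line."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=["name", "price"], lineterminator="\n")
    writer.writeheader()
    writer.writerows(rows)
    return buffer.getvalue()


def to_json(rows):
    """Serialize rows to indented JSON text."""
    return json.dumps(rows, indent=2)


csv_text = to_csv(records)
json_text = to_json(records)
```

CSV suits spreadsheets and flat reports, while JSON preserves types and nesting, which makes it the more common choice for feeding other programs.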

Octoparse offers an easy-to-use platform for users who want to start scraping data without investing in development resources. The ability to build workflows visually and run them in the cloud makes it a great option for marketers, researchers, and other non-technical users.

- Apify

Apify is a cloud-based development platform that enables developers to design and execute customized web scraping and automation projects. The platform supports Node.js-based JavaScript “actors” for extracting different types of data from websites.

Key Features & Benefits

- Programmable Actors: Develop your own custom web scraping or automation scripts using Node.js.

- Scheduler and Dataset Storage: Schedule recurring jobs on the platform, store datasets, and manage your data effectively.

- Proxy Management: Built-in proxy support improves the reliability of your web scraping projects.

- Actor Marketplace: Access pre-built web scrapers for many popular websites.

- Cloud Scalability: Run resource-intensive jobs on the Apify platform without having to manage your own servers.

Apify is suitable for teams that require complete flexibility when designing and executing web scraping workflows. With the ability to create customized actors and to have your work executed on the cloud, Apify is a great option for developers and automation engineers.

- DataForSEO API

Though often associated with search-related data, DataForSEO provides scraping APIs that can collect structured webpage data, including product information, prices, and SERP elements.

Key Features & Benefits

- Search & Product Data APIs: Structured scraping for SERPs and products.

- JSON-Ready Output: Cleanly formatted data responses.

- Global Data Access: Worldwide coverage capabilities.

- Flexible Pricing Tiers: Scalable plans for different workloads.

- Simple REST Integration: Easy implementation into workflows.

DataForSEO is well-suited for SEO professionals and teams focused on search-driven data collection.

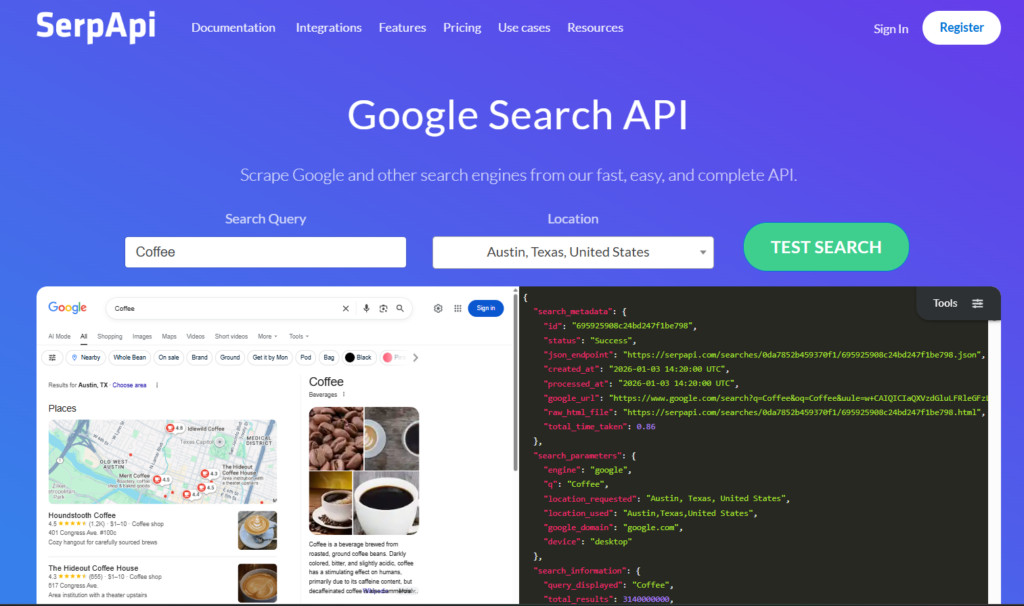

- SerpApi

SerpApi focuses on structured search engine result scraping with reliable, real-time delivery.

Key Features & Benefits

- Structured SERP Output: Clean JSON data from search engines.

- Location Targeting: Geo-specific result collection.

- Real-Time Data Access: Fast and consistent response times.

- Multi-Engine Support: Covers major global search platforms.

- Rich Result Parsing: Extracts ads, maps, and featured snippets.

SerpApi is best for businesses focused exclusively on search engine and SEO analytics data.

Final Thoughts

A good data scraping tool can greatly improve the way your company gathers and uses web-based information. The available tools, from full-service APIs to no-code platforms and developer-focused toolkits, offer different benefits depending on what your business wants to achieve and what technical resources it has.

If your organization's goal is to acquire data easily, reduce infrastructure complexity, and scale without difficulty, then investing in an effective web scraping solution is a smart business decision. Assess your needs, evaluate the options available, and build a solution that converts web data into useful knowledge.

The sooner you automate your data collection process, the sooner you will have a competitive advantage.